Workflow

A workflow in bioinformatics can be defined as a series of computational or data manipulation steps. To compose and execute such sequences, a variety of special workflow management systems have been developed. All such systems are based on an abstract representation of how a computation proceeds, in the form of a directed graph where each node represents a task to be executed and each edge represents either data flow or an execution dependency between tasks. Each system typically provides a visual front-end that allows the user to build and modify complex applications with little or no programming expertise.
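To make the graph model concrete, here is a minimal JavaScript sketch. It is not BioUML code: the task names and the `executionOrder` function are hypothetical and chosen only for illustration. Tasks are nodes, edges are dependencies, and a topological sort (Kahn's algorithm) yields an order in which every task runs after the tasks it depends on.

```javascript
// Hypothetical workflow: each edge [from, to] means "to" depends on "from".
const tasks = ["import", "normalize", "filter", "report"];
const edges = [
  ["import", "normalize"],
  ["normalize", "filter"],
  ["filter", "report"],
  ["import", "report"],
];

// Kahn's algorithm: repeatedly emit a task with no unmet dependencies.
function executionOrder(nodes, deps) {
  const indegree = new Map(nodes.map((n) => [n, 0]));
  const successors = new Map(nodes.map((n) => [n, []]));
  for (const [from, to] of deps) {
    successors.get(from).push(to);
    indegree.set(to, indegree.get(to) + 1);
  }
  const ready = nodes.filter((n) => indegree.get(n) === 0);
  const order = [];
  while (ready.length > 0) {
    const current = ready.shift();
    order.push(current);
    for (const next of successors.get(current)) {
      indegree.set(next, indegree.get(next) - 1);
      if (indegree.get(next) === 0) ready.push(next);
    }
  }
  if (order.length !== nodes.length) {
    throw new Error("The graph contains a cycle; no valid order exists.");
  }
  return order;
}

console.log(executionOrder(tasks, edges).join(" -> "));
// prints "import -> normalize -> filter -> report"
```

A real workflow engine may also run independent tasks in parallel; the sketch only shows why a directed graph is enough to derive a valid execution order.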

BioUML provides a workflow management system, which is intuitively handled through a simple drag-and-drop interface. With BioUML-related products users can either run pre-defined workflows or create their own for specific analysis purposes.

In BioUML the workflow is a special diagram type, so you can edit it using the diagram document.


Pre-defined workflows

BioUML, and especially the geneXplain platform, supports standard analyses through a number of pre-composed workflows that chain together some of the most important modules. This lets users start their first analyses right away, before they have learned all the details of the platform.

With any workflow the following steps are normally taken:

- importing data into the project (if necessary),

- data normalization (if necessary),

- selecting the appropriate workflow,

- specifying the input file(s),

- setting the parameters,

- specifying the output directory,

- running the workflow,

- viewing the output.

A few pre-defined workflows in the BioUML workbench are available as example data (e.g. Data tab in navigation pane > data > Examples > ChIPMunk workflows). geneXplain offers a somewhat richer choice of ready-made workflows (see the list of workflows below), with links on the start page that launch them directly.

Creating your own workflows

To create a new workflow in the BioUML workbench, first go to the Data folder (or a subfolder within it) of the project in which the new workflow will be stored (e.g. Data tab in navigation pane > data > Collaboration > test project > Data folder). As soon as you click on the Data folder in the navigation pane, a number of operation icons appear in the navigation toolbar. The names of the operations are shown as tooltip text (just place the mouse pointer over an icon). Click on the New workflow option.

To create a workflow in geneXplain go to the Start page and click Create your own workflow under the list of pre-defined workflow groups.

At this point you will be asked to specify the name of the new workflow in the pop-up dialog box. Here you can also choose a different location to save the workflow by navigating the directory tree and creating (sub)folders.

When you press OK in the dialog box, a new tab opens in the workspace, where you can design the new workflow diagram. The workflow diagram represents the analysis functions, connected by their input and output files. The resulting directed graph visualizes the sequence of analysis steps in the workflow. The diagram may also contain parameters, which are to be defined by the user.

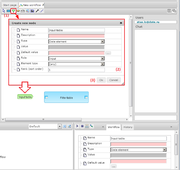

You can add nodes to the graph by dragging and dropping items directly from the Analyses tab of the navigation pane (Analyses tab > analyses > Methods > ...) or by using the toolbox within the tab. The toolbox contains icons for such types of nodes as analysis methods, analysis parameters, analysis expressions, cycles and analysis scripts, as well as for the Select tool, directed edges, notes and note edges. To add a node from the toolbox, click first on the icon and then within the workspace, set the parameters in the Create new node dialog box and press OK; the node will then appear in the graph. To add an edge, click on the edge icon in the toolbox, specify the output and input nodes in the Create new edge dialog box and press OK.

Upon clicking on any component of the workflow you can see the information about this particular element in the operations field below.

Now, let's consider the following step-by-step example of creating a simple workflow for filtering table data.

Six steps to compose a simple workflow

Suppose you have specified the name and directory for your new workflow and its tab is active in the workspace.

Step 1. Add the analysis function to the workflow diagram. You can either drag and drop the Filter table method from the subdirectory Methods/Data in the Analyses tab of the navigation pane, or add the analysis-method item from the toolbox and choose Data/Filter table from the drop-down list of the Create new node dialog box. The item will appear on the diagram as a light blue rectangle labelled "Filter table".

Step 2. To create the input table, click on the green element in the toolbar, move the cursor to the place in the workspace where you would like to put this element and click. A new window, Create new node, will pop up, where you define the parameters of the element:

- Name: the title of the element.

- Type: select “Data element” for any objects such as tables.

- Default value: here you can type the full folder path where the table is located. You can also use global variables such as “$project$” that already contain the full path (clicking the “…” button gives access to all global variables defined for this workflow).

- Rank (sort order): this number gives the position of this input element in the list of all elements shown when the workflow starts.

- Role: "Input", since this element is used to feed a table into the workflow.

The selected item will appear on the diagram as a light green arrow-like pentagon labelled according to the Name parameter.

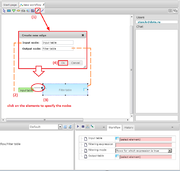

Step 3. To join elements on the diagram, click on the arrow symbol in the toolbar; a new window, Create new edge, will pop up. By clicking on the Table element in the workspace you select it as the Input node for this edge. Similarly, click on the input path (port) of the Filter table element to select it as the Output node of the new edge. After you press OK, the new connection (edge) appears.

Step 4. In the same way, you can now create an output table element on the diagram by selecting the yellow element in the toolbar (yellow because it will be an intermediate table for use in later steps of the workflow) and connecting it with the output icon of the Filter table element. In the Expression field you can now use the new global variable “$Table$”, which during the workflow run contains the name of the table you have entered.

In this case we create the name of the future output table, “$Table$ filtered”, by appending “filtered” to the name of the input table.
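This naming rule can be pictured as plain placeholder substitution. The sketch below is an assumption about the behaviour, not BioUML's actual implementation: the hypothetical `expandExpression` function replaces each `$Name$` placeholder with the value of the workflow variable of that name, and the table path is invented.

```javascript
// Replace every $Name$ placeholder with the matching variable value.
// Unknown names are left untouched so that mistakes stay visible.
function expandExpression(expression, variables) {
  return expression.replace(/\$([^$]+)\$/g, (match, name) =>
    Object.prototype.hasOwnProperty.call(variables, name)
      ? String(variables[name])
      : match
  );
}

// Hypothetical input: the user picked a table called "My genes".
const variables = { Table: "data/Projects/Demo/Data/My genes" };
console.log(expandExpression("$Table$ filtered", variables));
// prints "data/Projects/Demo/Data/My genes filtered"
```

Because the placeholder expands to the location of the input table, the derived name points to the same folder as the input.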

As a result, we have now created one step of the workflow.

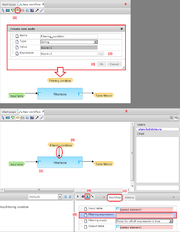

Step 5. To filter data, it is necessary to define a filtering condition. To do so, create a new element named Filtering condition (yellow element in the toolbar). This time it is of simple string type, and its Expression field contains the filtering condition “Score > 2”.

This element should now be connected to the analysis function Filter table, to define the filtering condition that is applied at this step of the workflow. To do that, first click on the Filter table element and open its parameters in the operations field (the Workflow tab). Then click on the field Filtering expression in the parameter list to select it (a blue background indicates that the field is selected). Click on the Bind property to variable icon in the toolbar of the operations field. Finally, move the cursor to the workspace and click on the Filtering condition element on the diagram; the filtering condition is now bound to the corresponding field of the Filter table function.

Step 6. The workflow is now ready to be executed. To start it, click on the Run workflow button in the toolbar of the operations field.

In the Workflow parameters pop-up dialog, specify the input table: navigate to the folder with your tables, select a table that has a column “Score” and press OK. The workflow is then executed, and a new table whose name ends with “filtered” is created in the same folder as the input table.
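As a rough illustration of what this workflow does to the data, the sketch below applies the condition Score > 2 to a small in-memory table in plain JavaScript. The rows, the `filterTable` helper and the predicate form are invented for illustration; BioUML evaluates the filtering expression itself, and its expression syntax may differ from a JavaScript predicate.

```javascript
// Hypothetical table rows; only the "Score" column matters here.
const inputTable = [
  { id: "gene1", Score: 3.4 },
  { id: "gene2", Score: 1.2 },
  { id: "gene3", Score: 2.0 },
  { id: "gene4", Score: 5.1 },
];

// Keep the rows for which the condition holds.
function filterTable(rows, condition) {
  return rows.filter(condition);
}

const filtered = filterTable(inputTable, (row) => row.Score > 2);
console.log(filtered.map((row) => row.id)); // gene1 and gene4 remain
```

Note that the comparison is strict, so the row with a score of exactly 2 is dropped.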


Complex workflows

More complex workflows are created by adding further workflow steps and connecting them through a common data element. As shown in the figure, the output element Table filtered of the first step is used as the input element of the second step of the workflow, Regulator search. You can also see that a new input parameter, Species, has been added; it now appears among the workflow parameters when the workflow is started. With it, you can select the taxonomic species (presently human, mouse or rat) of the table you are going to run through the workflow.

Note: During execution of a workflow a “research diagram” is saved (you can specify the name of this diagram before starting the workflow).

The research diagram contains the history of the workflow execution with the names of all input and output files. It also contains all the links to these tables, so that you can easily open them by clicking on the respective element in the diagram.

Cycles and scripts

Another element available for composing workflows is the cycle. It can be created using the cycle button in the toolbox. In the Create new node dialog box it’s necessary to specify the Name of the cycle (the “cycle variable”), and to choose the appropriate Type and Cycle type.

The Cycle type “All elements in collection”, together with the Type “Data element” and a folder name in the Expression field, means that all data elements from that folder are taken one by one as values of the cycle variable. With Cycle type “Table columns”, Expression should specify the name of the table. With Cycle type “Range (from..to)”, Type “Integer number” and Expression “2..6”, the cycle is executed with the values 2, 3, 4, 5 and 6 assigned in turn to the cycle variable.
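The range case can be sketched in a few lines of JavaScript. The `expandRange` function is hypothetical and only mimics the behaviour described here (an inclusive range written as “from..to”); it is not part of BioUML.

```javascript
// Expand a "from..to" expression into the inclusive list of integers.
function expandRange(expression) {
  const match = /^\s*(-?\d+)\s*\.\.\s*(-?\d+)\s*$/.exec(expression);
  if (!match) {
    throw new Error(`Not a range expression: ${expression}`);
  }
  const from = Number(match[1]);
  const to = Number(match[2]);
  const values = [];
  for (let value = from; value <= to; value++) {
    values.push(value);
  }
  return values;
}

// Each value would be assigned to the cycle variable in turn.
for (const cycleVariable of expandRange("2..6")) {
  console.log(cycleVariable);
}
```

The loop prints the numbers 2 to 6, one per line, matching the five iterations the cycle would perform.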

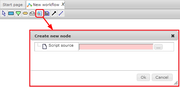

The script workflow element represents code written in JavaScript, which can be executed during the workflow run. To add a script, press the analysis-script toolbox icon, click on the desired place in the workflow diagram area and type the JavaScript code in the Script source field.

All variables defined in a workflow (green and orange boxes) are available inside the script as JavaScript variables. If the name doesn’t contain spaces, it can be used as it is; otherwise the name should be put into $["…"]. For example, the variable “TableColumn” can be accessed from a script either by the name TableColumn or as $["TableColumn"], but “Table column N1” must be accessed as $["Table column N1"].

It's also possible to change the workflow variable from the script. To achieve this you should perform the following steps:

- Create an arrow that starts from the script workflow element and ends at the variable node you want to change. Only variables connected by such arrows can be written.

- Use $["<VariableName>"] = <new_value> in the script. Implicit type conversion may be performed if necessary.
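The access rules can be tried out outside BioUML with a stand-in object. In the sketch below, `$` is an ordinary JavaScript object defined by hand to play the role of the workflow variable map, and the variable names and values are hypothetical. Inside a real workflow script `$` is provided for you, and output goes through `print` rather than `console.log`.

```javascript
// Stand-in for the workflow variable map that BioUML provides as $.
// The variable names and values are hypothetical.
const $ = {
  TableColumn: "Score",
  "Table column N1": "logFC",
  Threshold: 2,
};

// Reading: bracket access works for any name, with or without spaces.
console.log($["TableColumn"]);
console.log($["Table column N1"]);

// Writing: in a real workflow this only takes effect if an arrow
// connects the script element to the Threshold variable node.
$["Threshold"] = $["Threshold"] + 1;
console.log($["Threshold"]);
```

The stand-in cannot reproduce the bare-name shortcut (using TableColumn directly), which is a convenience of the workflow script environment.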

Example 1. Print column names

In this example the data element parameter InputTable (shown as a green box) is first added to the workflow. Then a cycle with the following settings is added:

- Name: TableColumn

- Type: String

- Cycle type: Table columns

- Expression: $InputTable$.

Note that the Expression of the cycle is set to $InputTable$, so the cycle runs over the columns of the table chosen as InputTable.

Then a script element with the following code is created inside the cycle:

print(TableColumn);

When the Run button is pressed, the workflow will ask for a table path, and then will print all column names into the workflow output log.

Example 2. Run GO classification for all tables in a folder

This workflow (see the figure) contains three input elements: InputFolder, where one or more Tables should be placed; ResultFolder that will be created by the method Create folder, and the data element Species, required for table conversion and functional classification.

The cycle has the following settings:

- Name: Table

- Type: Data element

- Cycle type: All elements in collection

- Expression: $InputFolder$

Here, the cycle variable named Table takes in turn the name of each table in InputFolder. Each table is then passed to the Convert table method, which converts its identifiers to Ensembl genes according to the analysis settings below:

- Input type: (Auto)

- Output type: Genes: Ensembl

- Species: Human (Homo sapiens)

- Name of main column: (no column)

- Aggregator for numbers: average

The conversion result is taken as the input set for the Functional classification analysis:

- Source data set: <same as the output table at the previous step>

- Species: Human (Homo sapiens)

- Classification: Full gene ontology classification

- Minimal hits to group: 2

- Only over-represented: V (yes)

- P-value threshold: 0.05

The output of the functional classification is a data element named GOres with Expression $ResultsFolder$/$Table/name$ GO.

When the Run button is pressed, the workflow will start.
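To see how one result per input table comes about, the following sketch derives the output names for a hypothetical folder. It assumes that the /name part of the output expression resolves to the last component of the table path, which this page does not state explicitly. The folder contents, the paths and the `elementName` helper are all invented for illustration.

```javascript
// Last component of a slash-separated path (assumed meaning of "/name").
function elementName(path) {
  return path.split("/").pop();
}

// Hypothetical folder contents and result folder.
const inputFolder = [
  "data/Demo/Input/Liver genes",
  "data/Demo/Input/Kidney genes",
];
const resultFolder = "data/Demo/Results";

// One pass of the loop corresponds to one cycle iteration.
const outputs = inputFolder.map(
  (table) => `${resultFolder}/${elementName(table)} GO`
);
console.log(outputs);
```

Under these assumptions, each table in the input folder gets its own classification result in the result folder, named after the table with “GO” appended.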